Blog

-

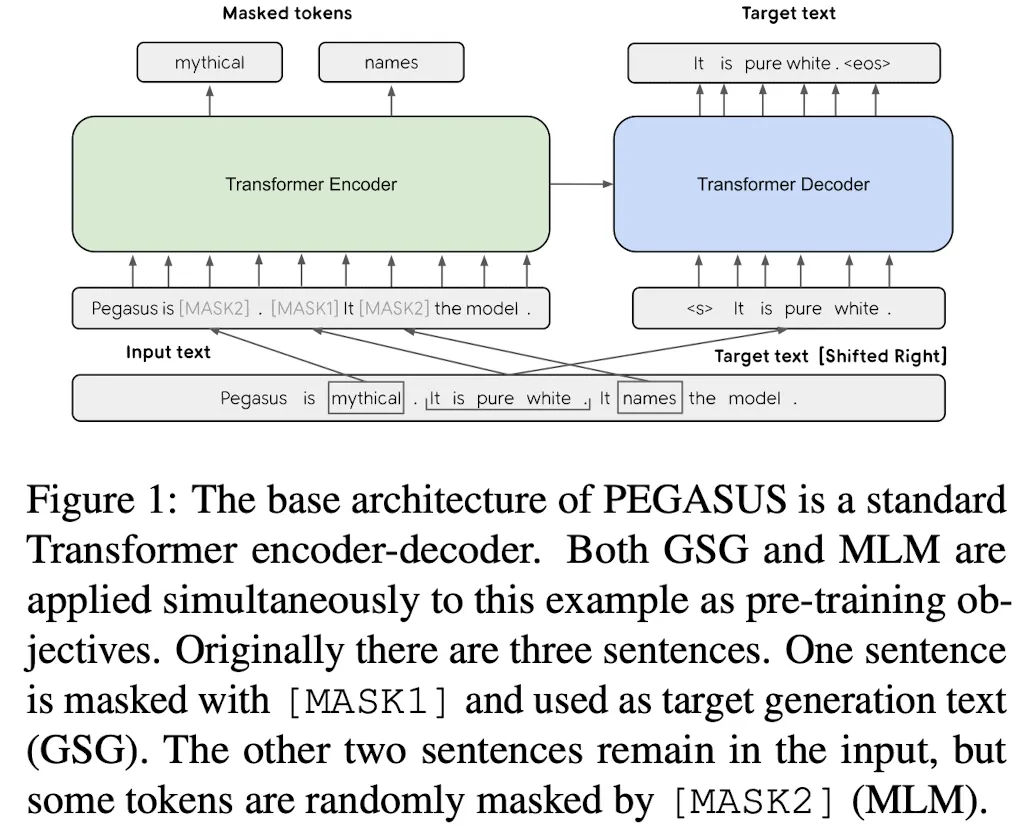

How good is SOTA summarization?

Estimating how well the latest SOTA language models perform at summarization.

-

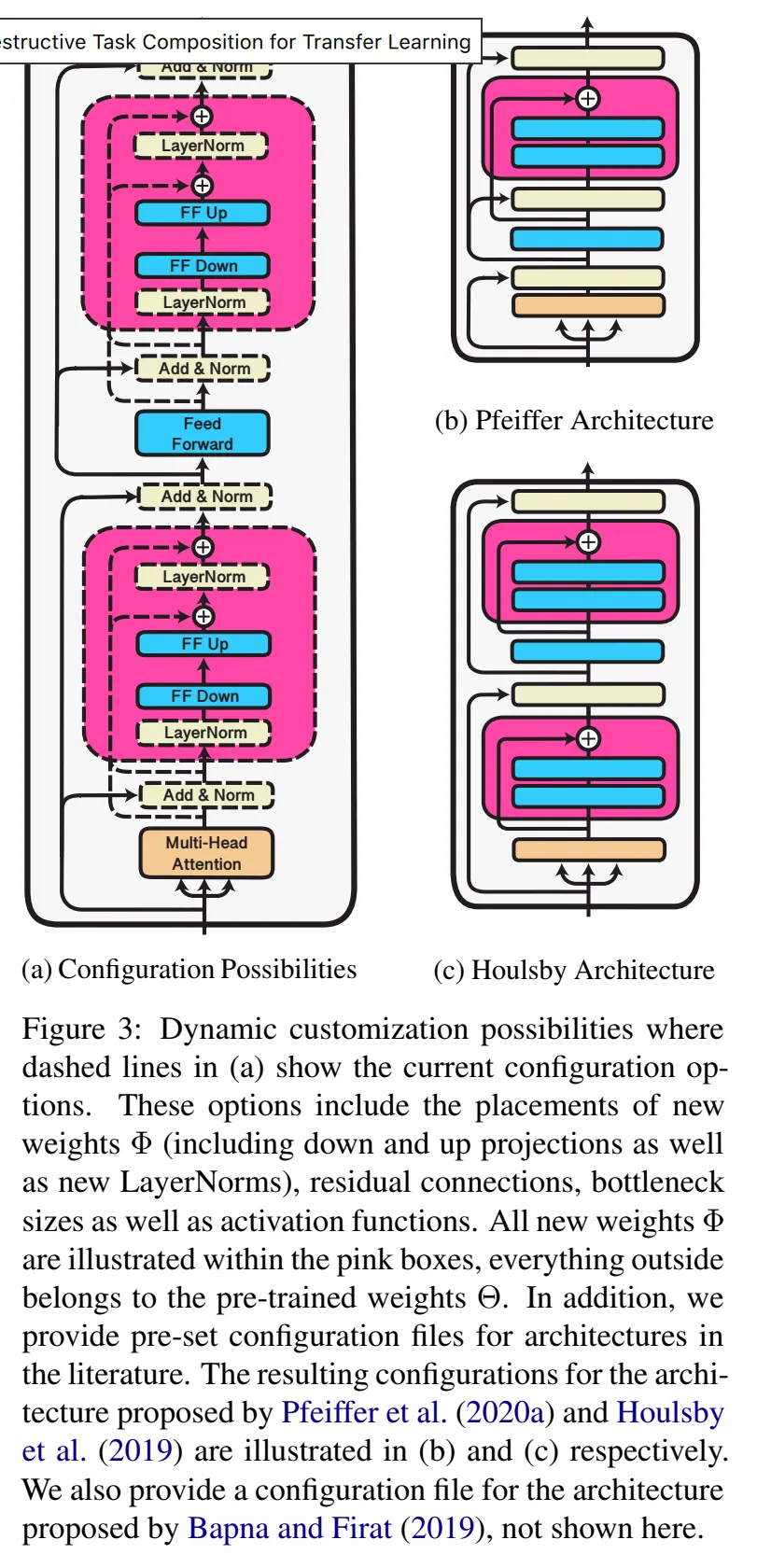

Adapters and AdapterHub for transformers

Fine-tuning a transformer-based model to suit your needs does not necessarily require training all of it, and can be more efficient than you might think. Sometimes training only part of the model already does most of the job.

-

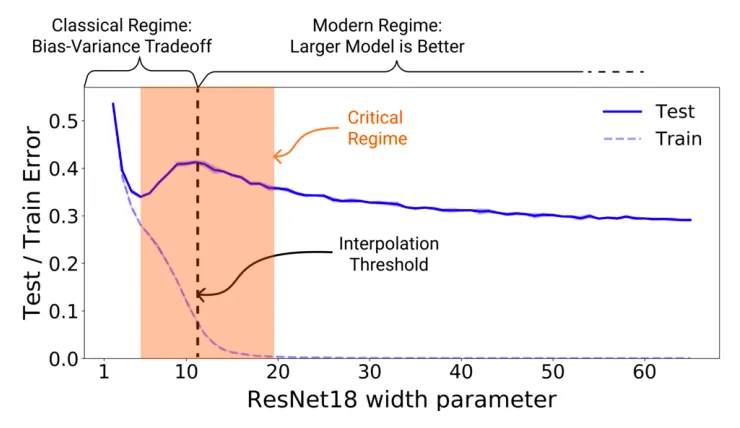

Double Descent or Bias-Variance Trade-off? Here is what you need

Over-parametrization has long been considered a pitfall when a model has only limited features and limited data. Now, however, it seems to be the hope for the next generation of AI models.

-

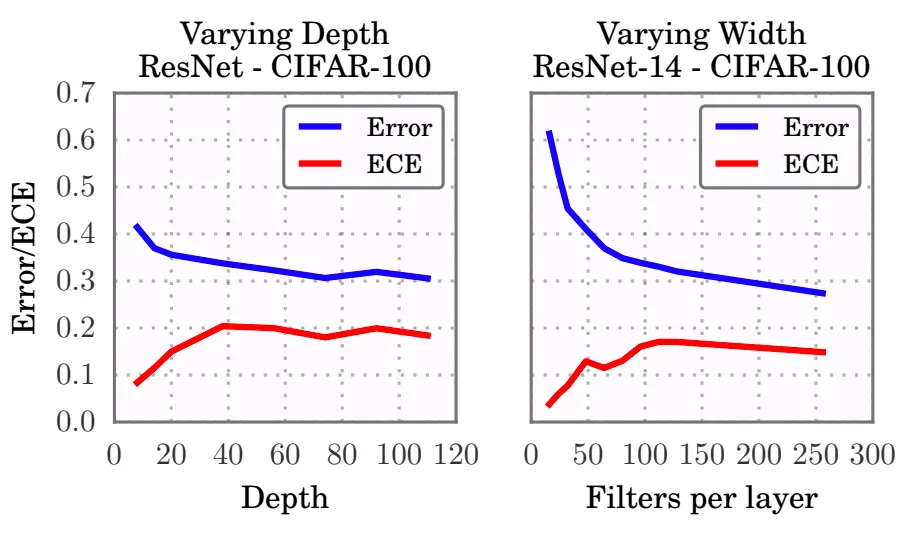

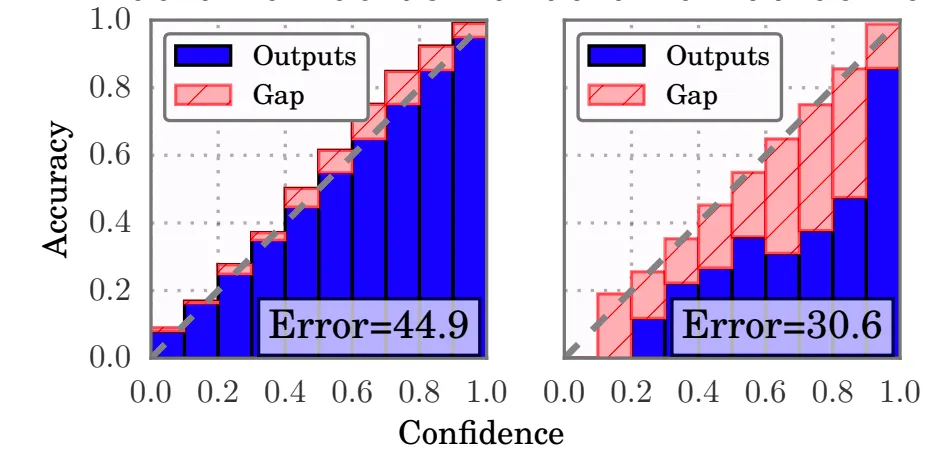

More about Model Calibration

Where does the misalignment between a model's predicted confidence and its accuracy come from? This post unveils the source and shows you a practical way to handle it.

-

Model Calibration, the thing that an expert may miss

An accurate model is not necessarily a well-calibrated one. An uncalibrated model could seriously damage your business value in production.